Our workshop "Event-Based Multimodal Vision (EBMV)" has been accepted by ECCV'26.

Haoyue Liu 刘昊岳

Postdoctoral Researcher

1037 Luoyu Road, School of Artificial Intelligence and Automation,

Huazhong University of Science and Technology (HUST),

Wuhan, China, 430074

Email: liuhy@hust.edu.cn

Biography

Biography and research interests.

I am currently a postdoctoral researcher at Huazhong University of Science and Technology (HUST). Previously, I received my Ph.D. degree in Artificial Intelligence from HUST in 2025, under the supervision of Prof. Luxin Yan and Prof. Yi Chang. I obtained my M.S. and B.E. degrees from China University of Mining and Technology (CUMT).

My current research interests include event-based vision, low-light and high-dynamic-range imaging, and dynamic scene perception. My research aims to develop robust imaging and perception methods for challenging scenarios with extreme illumination, high-speed motion, and limited sensing resources.

News

Recent updates.

Three papers on event-based low-light imaging, turbulence mitigation, and object tracking have been accepted by CVPR'26, including one Highlight.

We won 1st place in the track 'Event-Based Pose Estimation' in the CVPR'26 SPARK AI4Space Challenge.

Our diffusion-based optical flow paper has been accepted by NeurIPS'25 (Spotlight).

I was invited to give an online talk titled “Event-based High-Speed and High Dynamic Range Imaging” at the 18th Member Sharing Forum of the China Society of Image and Graphics (CSIG).

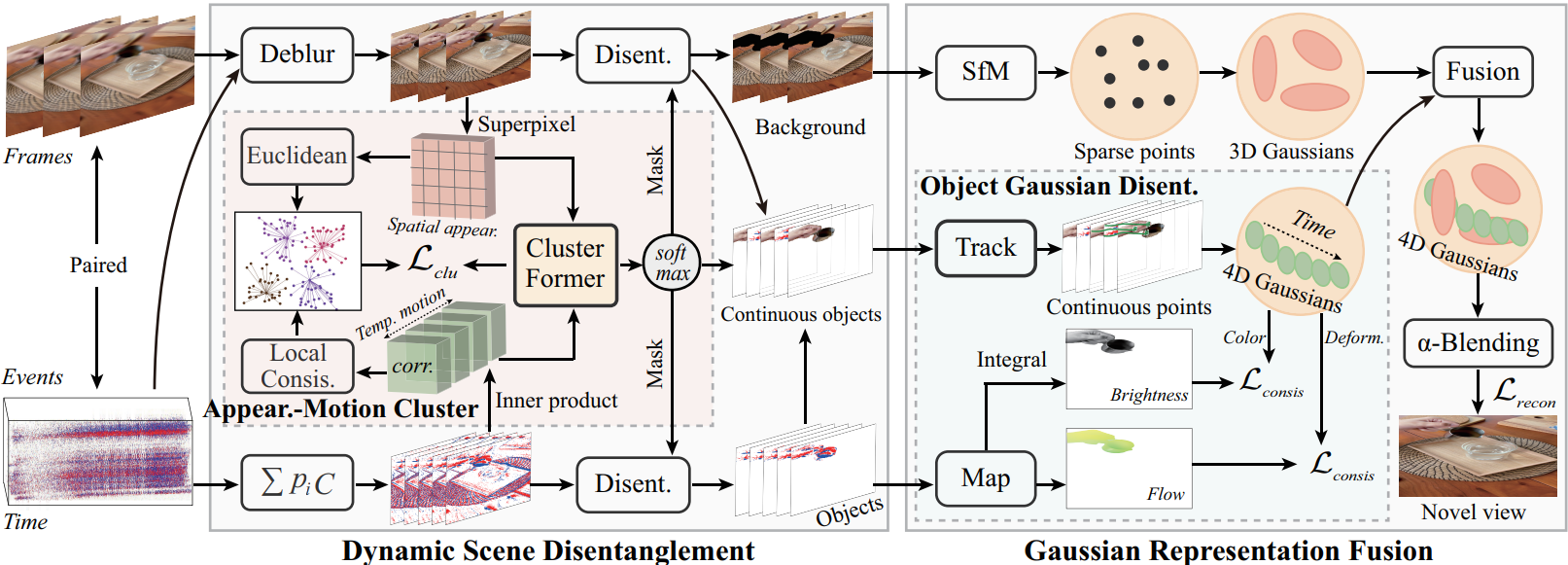

Our event-based 4D Gaussian splatting paper has been accepted by ICCV'25.

Our event-based video frame interpolation paper has been accepted by CVPR'25.

Our event-based dense and continuous optical flow paper has been accepted by CVPR'25.

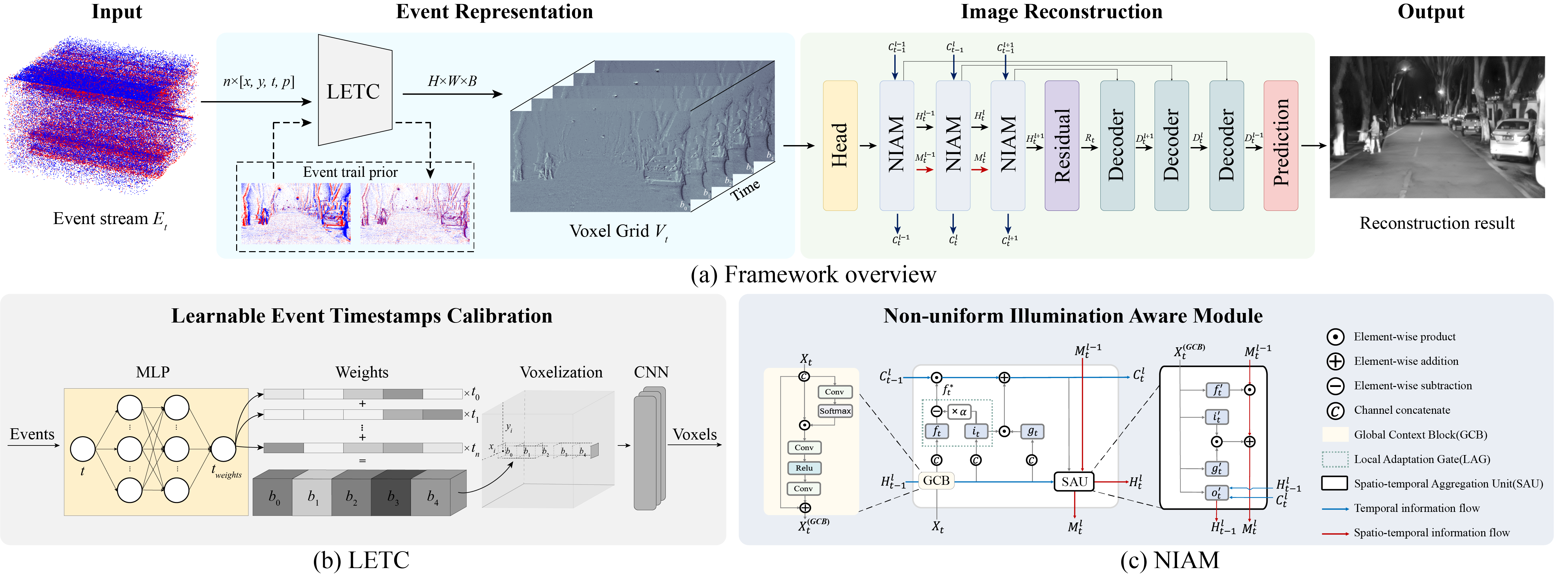

Our work on event-based low-light imaging has been accepted by TPAMI'25.

Our work on event-based nighttime HDR imaging has been accepted by CVPR'24.

Our work on event-based nighttime optical flow estimation has been accepted by ICLR'24 (Spotlight).

We won 1st place in the track 'Atmospheric Turbulence Mitigation' in the CVPR'23 UG2+ Challenge.

Publications

Published and unpublished works.

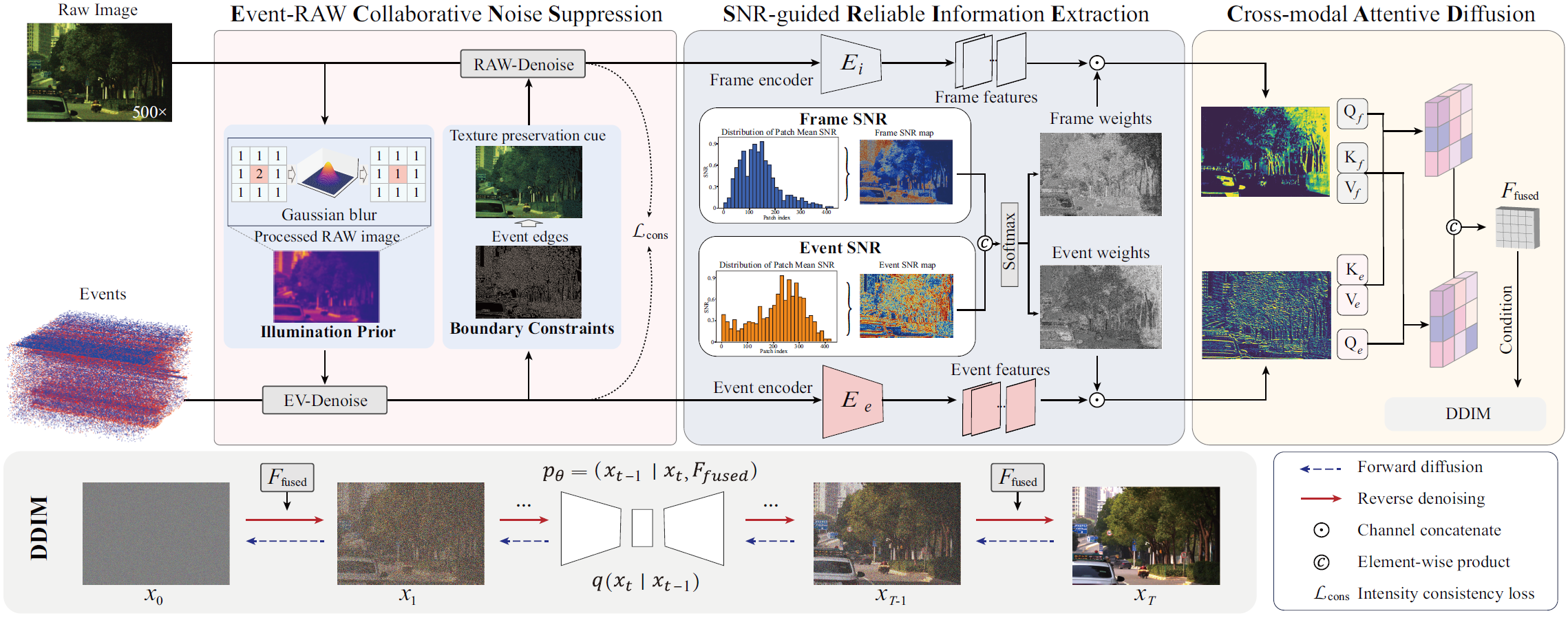

NEC-Diff: Noise-Robust Event-RAW Complementary Diffusion for Seeing Motion in Extreme Darkness

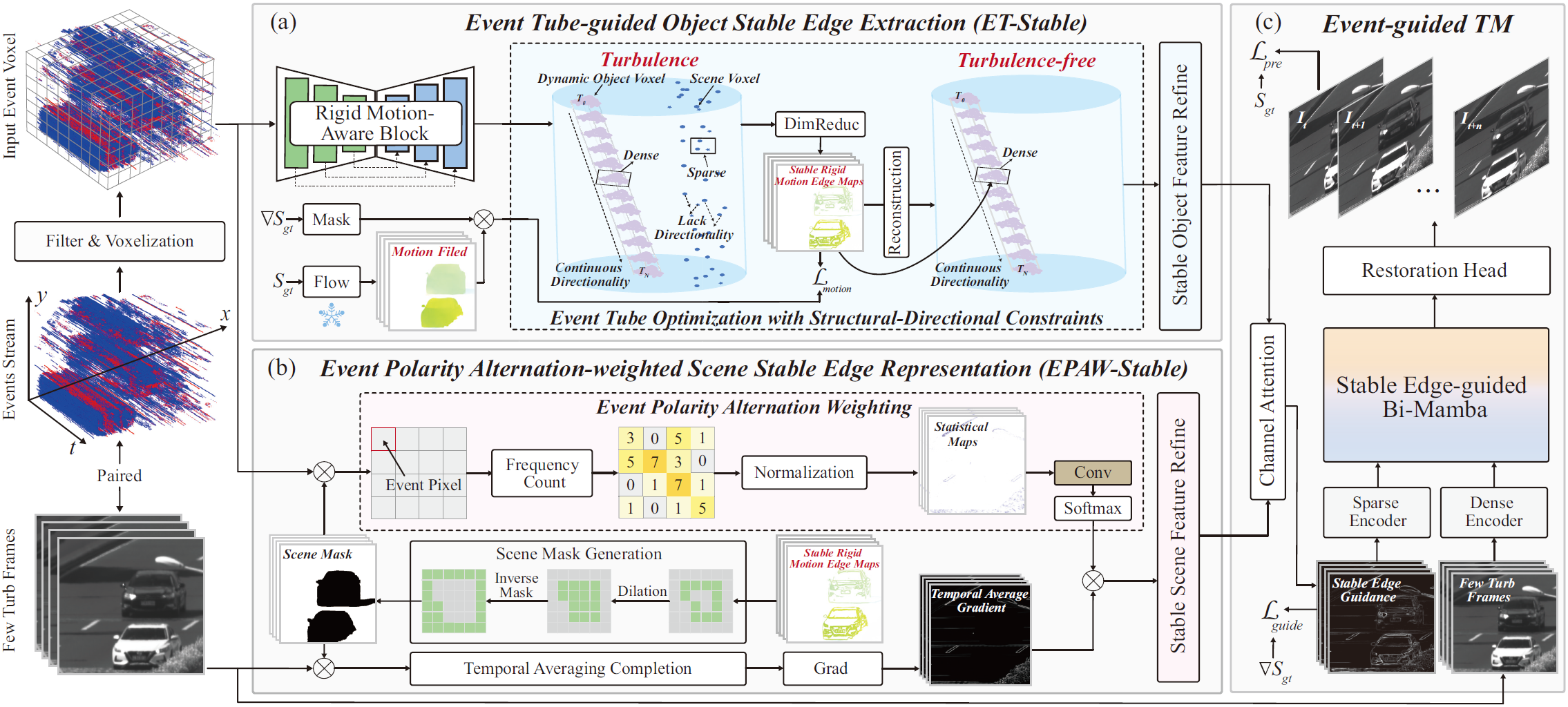

High-Quality and Efficient Turbulence Mitigation with Events

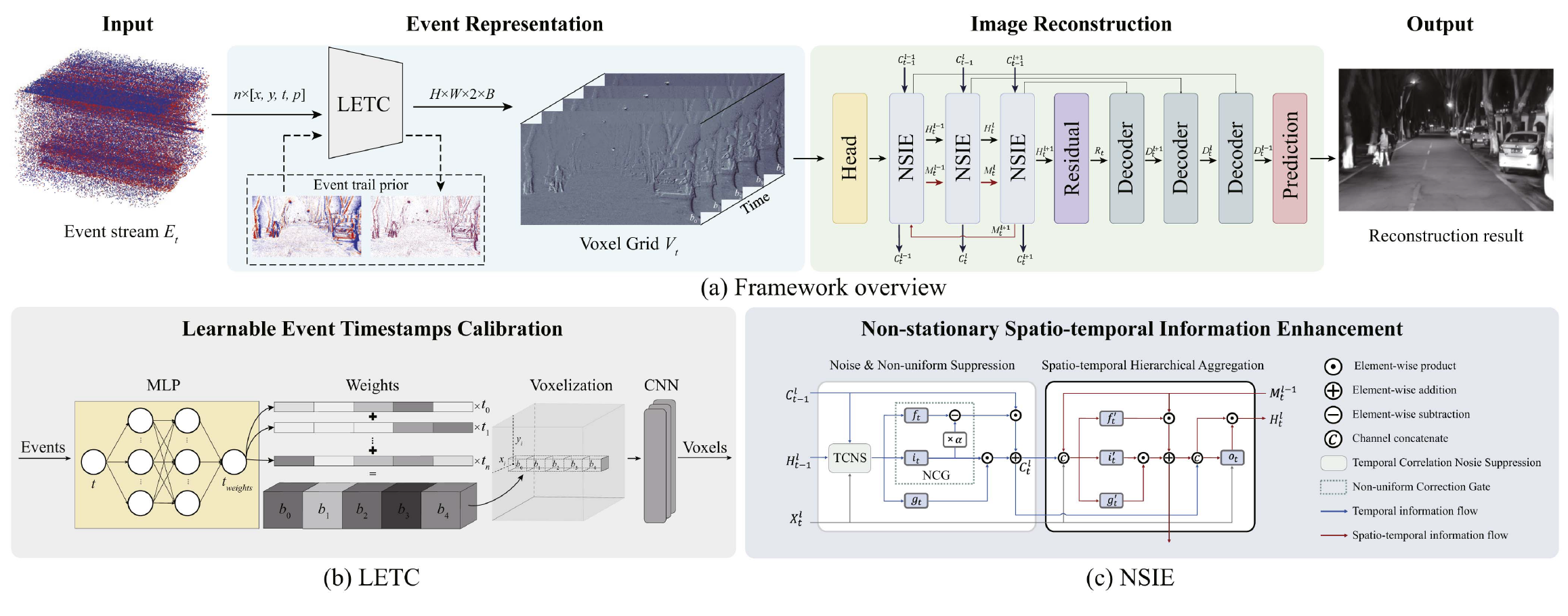

NER-Net+: Seeing Motion at Nighttime with an Event Camera

TimeTracker: Event-based Continuous Point Tracking for Video Frame Interpolation with Non-linear Motion

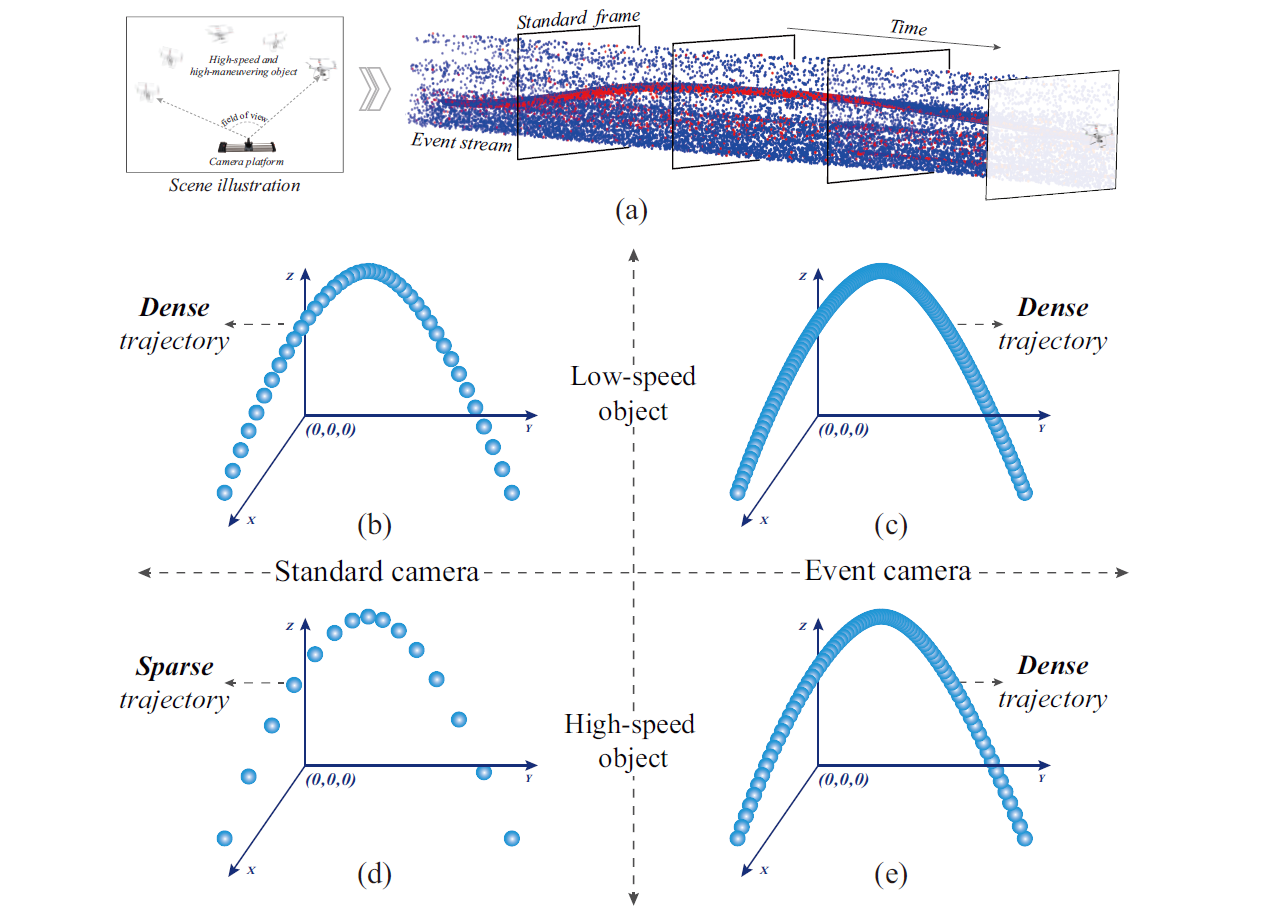

Event-Structured Modeling and Detection of Faint Space Objects with a Dynamic Vision Sensor

Seeing Motion at Nighttime with an Event Camera

Tracking through Severe Occlusion via Event-Derived Transient Cues

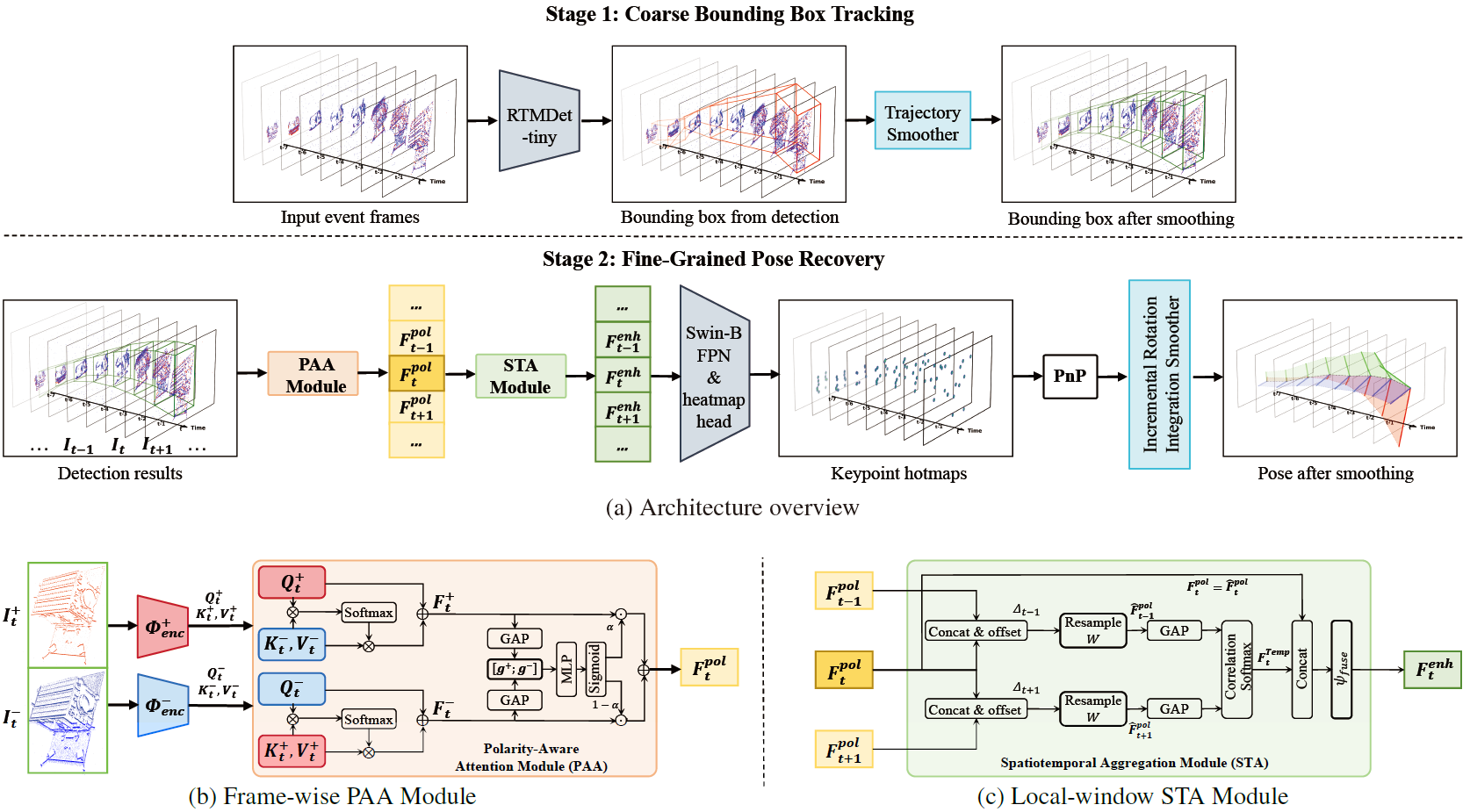

From Spatiotemporal Decoupling to Kinematic Smoothing: A Robust Pipeline for Event-Based Spacecraft Pose Estimation

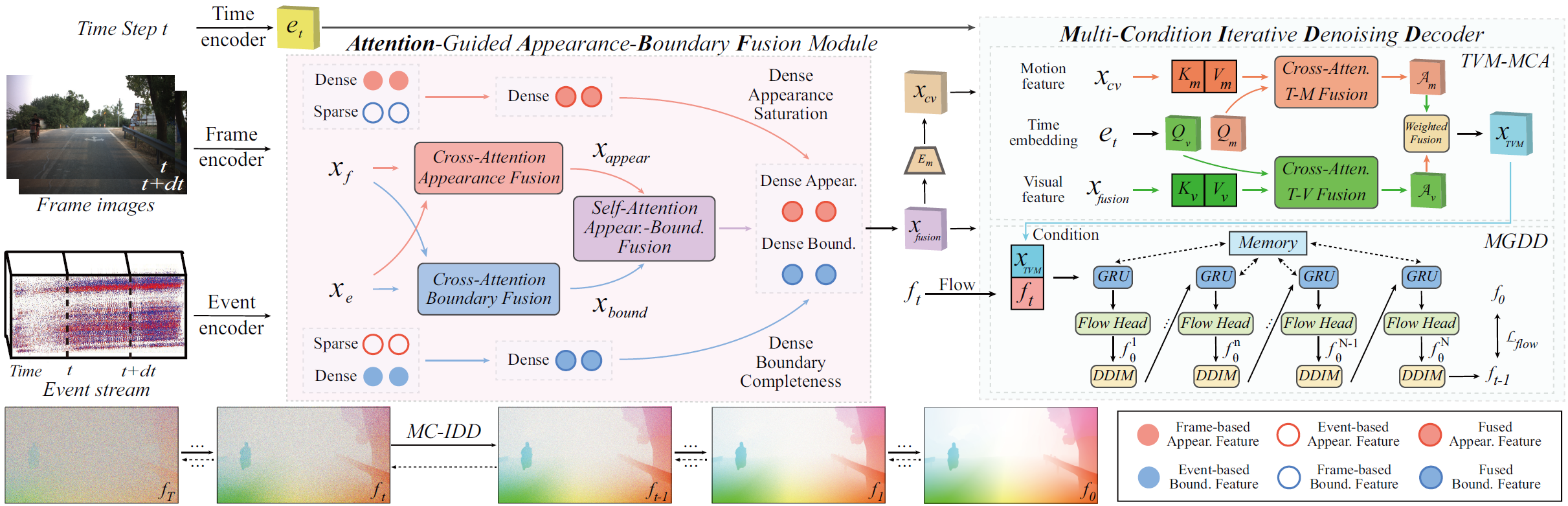

Injecting Frame-Event Complementary Fusion into Diffusion for Optical Flow in Challenging Scenes

STD-GS: Exploring Frame-Event Interaction for SpatioTemporal-Disentangled Gaussian Splatting to Reconstruct High-Dynamic Scene

Bridge Frame and Event: Common Spatiotemporal Fusion for High-Dynamic Scene Optical Flow

Bring Event into RGB and LiDAR: Hierarchical Visual-Motion Fusion for Scene Flow

Exploring the Common Appearance-Boundary Adaptation for Nighttime Optical Flow

Under review

Nighttime Scene Optical Flow: Common Spatio-Temporal Motion Adaptation

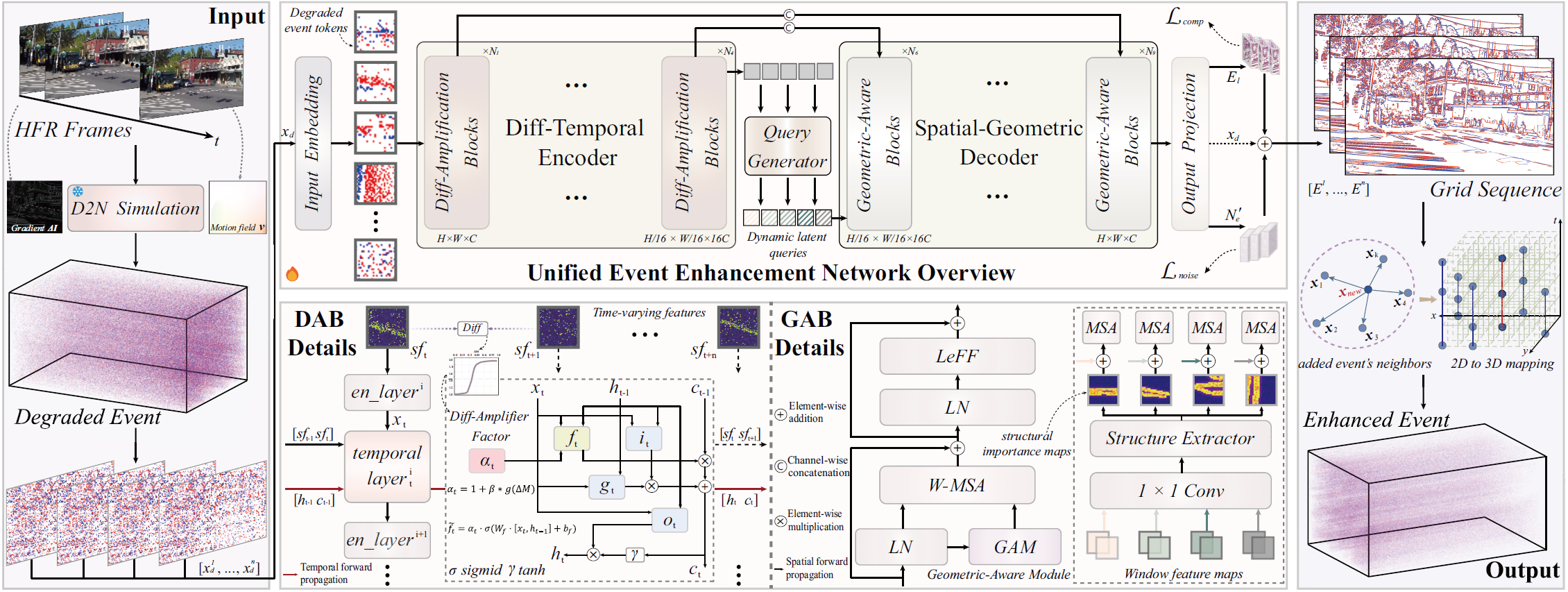

UEE-Net: Unified Event Enhancement Framework for Denoising and Completion

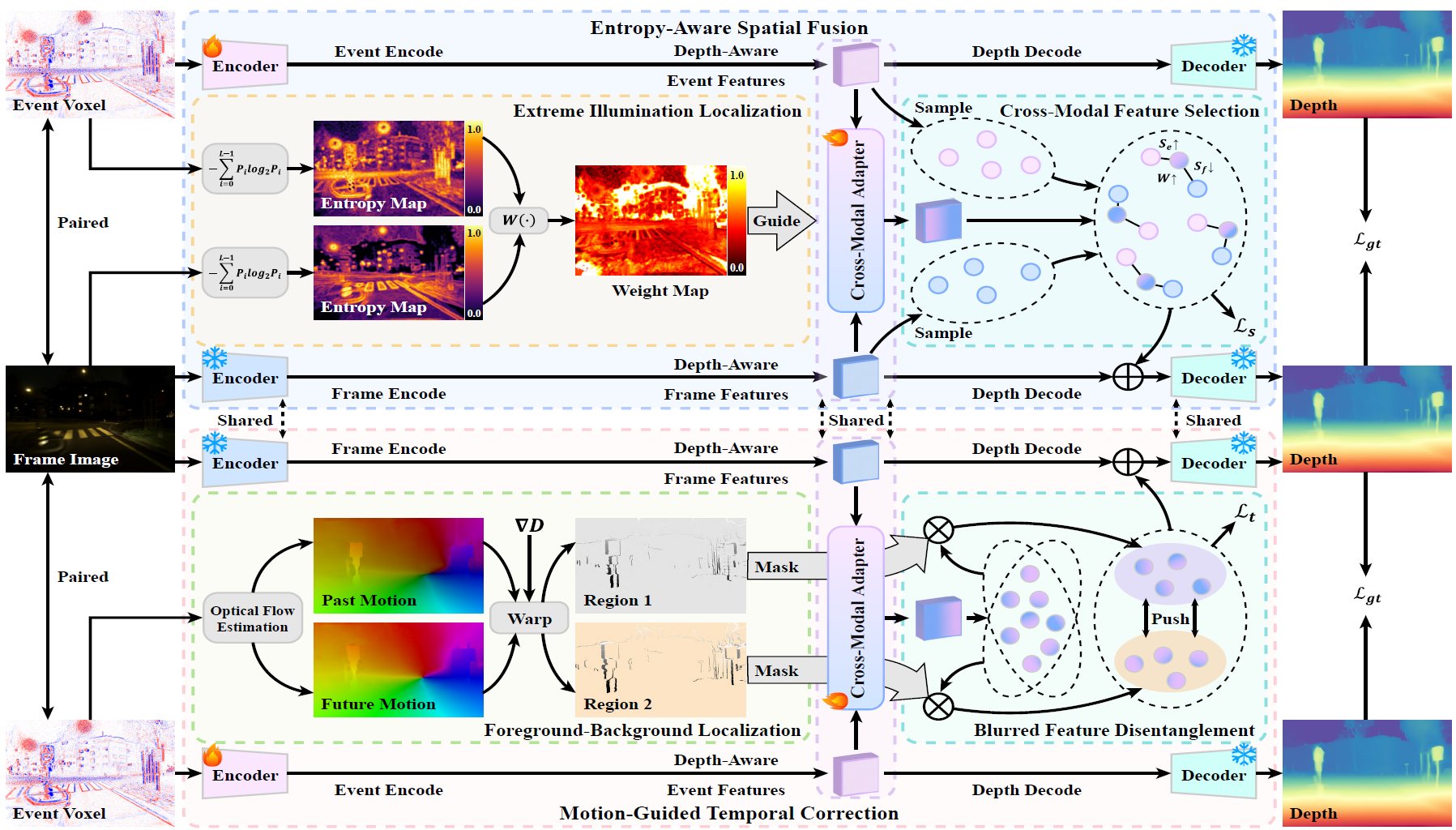

Adapting Depth Anything to Adverse Imaging Conditions with Events

Awards

Awards and honors.

Academic Services

Academic reviewing services.

Journal Reviewers: IJCV, TIP, TCSVT, TMM, RAL.

Conference Reviewers: CVPR, ICCV, ECCV, NeurIPS, AAAI, ICRA, BMVC.

Other: Guest Editor Assistant for the Sensors Special Issue "Event-Based Vision and Multimodal Sensor Fusion".